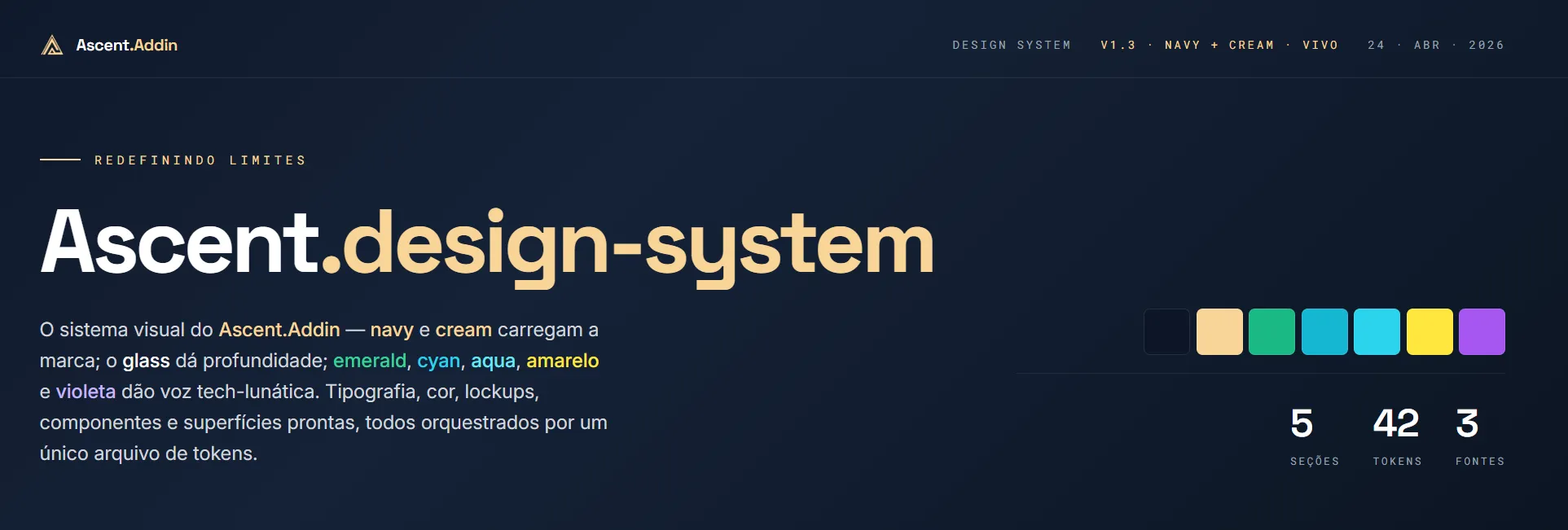

How I used Claude to build Ascent's Design System

I dropped Ascent's repo into Claude Design and asked it to reverse-engineer my brand. The output became the DESIGN_SYSTEM.md every agent reads.

TL;DR

I dropped Ascent’s entire repo into Claude Design and asked it to give me back a design system. In minutes, I got a navigable project with HTML, CSS, and tokens. Then I used Claude Code to compile everything into a single DESIGN_SYSTEM.md that every AI agent reads before producing anything for the brand. Today, Ascent’s identity no longer lives only in my head.

The hook

A design system isn’t a pretty PDF sitting in a Drive folder no one opens.

It’s infrastructure.

And it took me too long to understand that.

The tension

A few days ago, I was trying to ask Claude to generate an Ascent post. I gave it the brief, explained the palette, described the voice. The result was decent. I asked again a week later. Completely different output: a different shade of blue, a different tone of copy, a different title style.

The problem wasn’t Claude. It was me.

Ascent’s identity existed only in my head. The colors “shifted over the years” (I saw it myself in old slides: #141F2B, #152238, #0D1628 - what was the right blue, again?). The voice was roughly what I remembered it being. The typographic rules were intuitive to me and to no one else.

Then AI agents entered the picture, and the problem became catastrophic.

Without clear rules written somewhere a machine could read, every prompt produced a different version of Ascent. Today it came out purple. Tomorrow beige. The day after, yellow. A brand that becomes a lottery doesn’t claim space in the market.

And worse: I couldn’t scale. Every contractor who came in needed 30 minutes of verbal briefing. Every new agent needed a monumental prompt at the start of the conversation. Unsustainable.

I had to turn the implicit into the explicit. Once. In a way both humans and machines could read.

Building it

What I did (and how long it took)

I decided to test a hypothesis: what if I dropped everything I had into Claude Design and asked it to reverse-engineer my own brand?

I uploaded into Claude Design:

- The frontend repo of Ascent.Addin (with all the CSS, Angular components, tokens)

- The old visual identity (PDF, logos, swatches)

- Instagram posts I considered “on point”

- Notes scattered across Notion and Git commits

- Pitch decks I’d shown investors

No briefing. No voice instruction. No explanation. Just the material.

In minutes, Claude Design handed me a complete, navigable design system: HTML, CSS, tokens, components, and cross-linked pages. It had a palette, typography, a component guide, voice rules, and copy examples. Better than anything I would’ve stitched together in Figma over 3 weeks.

But then problem #2 showed up.

AI agents don’t navigate websites. Agents read text.

The system was beautiful, navigable, and totally useless to any LLM. I needed a second step.

Compiling via Claude Code

I used Claude Code to read the entire project and compile everything into a single Markdown file: DESIGN_SYSTEM.md. That file is dense. Today it’s 881 lines long. It covers the color palette with hex values and CSS tokens, the type scale, canonical components, authorized gradients, voice rules in two modes (founder and institutional), canonical copy, exact product naming, an anti-error checklist, and much more.

And that file ships with every prompt.

Every time an Ascent agent has to produce something for the brand, it reads DESIGN_SYSTEM.md first. Every time I ask for a post, slide, or landing, Claude already knows: Navy #0D1628, Cream #F9D592, Space Grotesk for display, Inter for body, Roboto Mono for technical eyebrows. It knows the blog is founder mode (first person) and the landing is institutional mode (third person). It knows emerald is exclusive to the // FREE badge and download button. It knows it never mixes the two voice modes in the same block.

I stopped explaining the brand on every prompt.

Why it works (the technical principle)

There’s research published by Hardik Pandya that captures what happens when you expose a design system to LLMs in a structured way. The core point: LLMs fabricate values where they find no rules. Without a closed token layer, the model picks any blue. Without a voice spec, it averages copy tones until something mediocre comes out.

The fix isn’t a verbal briefing per prompt. It’s a closed token layer plus a spec file the model loads before any brand task.

That’s exactly what DESIGN_SYSTEM.md does. There’s no blue to fabricate because var(--brand-navy) exists. There’s no voice tone to improvise because §3 has a per-channel decision table, canonical examples, Do/Don’ts, and reusable copy.
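To make the "closed token layer" idea concrete, here is a minimal Python sketch of a check that flags any hex color outside an authorized set. It is an illustration under assumptions, not Ascent's actual tooling: the function name and the sample CSS are made up, and only the Navy and Cream values come from this post.

```python
import re

# Authorized values quoted in this post (Navy, Cream). Extend with your own palette.
AUTHORIZED_HEX = {"#0D1628", "#F9D592"}

# Matches 3- or 6-digit hex colors such as #fff or #0D1628.
HEX_PATTERN = re.compile(r"#(?:[0-9a-fA-F]{6}|[0-9a-fA-F]{3})\b")


def find_unauthorized_hex(text: str, authorized: set[str] = AUTHORIZED_HEX) -> list[str]:
    """Return hex colors in `text` that are not authorized, in order of first appearance."""
    allowed = {value.upper() for value in authorized}
    found: list[str] = []
    for match in HEX_PATTERN.finditer(text):
        value = match.group(0).upper()
        if value not in allowed and value not in found:
            found.append(value)
    return found


# Hypothetical CSS: two authorized colors plus one of the drifted blues from old slides.
sample = "body { background: #0D1628; color: #F9D592; border-color: #152238; }"
print(find_unauthorized_hex(sample))  # prints ['#152238']
```

Run against generated output, a check like this turns "the model picked some other blue" from a visual impression into a failing test.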

The practical result Hardik documents on his Atlaskit project was reducing 418 hardcoded values to zero and ensuring “the 10th session produces the same visual quality as the 1st.” For me, the leap was simpler: every carousel, every blog post, every Ascent landing page now ships consistent, whether it’s me, a contractor, or an autonomous agent running the prompt.

The structure that worked: Skills in Claude Code

What made a huge difference was turning DESIGN_SYSTEM.md into a Skill in Claude Code.

Quick primer: Skills are filesystem-based resources that extend what Claude can do. They’re like modular expertise packs. You create a SKILL.md file, drop in the instructions, and Claude loads the full content only when needed. That solves a critical problem: it doesn’t burn the context window prematurely. Claude knows the skill exists and when to use it, but only pulls the full content at use time.

For Ascent, I created the ascent-design-system skill. Any agent producing brand artifacts invokes it first. The skill fetches DESIGN_SYSTEM.md via gh api (the repo is private), caches it locally, and the agent reads the file before writing a single word.
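The fetch-and-cache step can be sketched roughly as follows. This is an assumption-laden illustration, not the skill's real code: the function names, the one-hour freshness window, and the cache location are hypothetical. The `gh api` call uses GitHub's repository contents endpoint with the raw media type, and it requires an authenticated GitHub CLI.

```python
import subprocess
import time
from pathlib import Path
from typing import Callable


def fetch_with_gh(repo: str, path: str) -> str:
    """Fetch a file's raw content from a (possibly private) repo via the GitHub CLI."""
    result = subprocess.run(
        ["gh", "api", f"repos/{repo}/contents/{path}",
         "-H", "Accept: application/vnd.github.raw+json"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def load_design_system(cache_file: Path, fetch: Callable[[], str],
                       max_age_seconds: float = 3600.0) -> str:
    """Return the cached copy if it is fresh; otherwise fetch and refresh the cache."""
    if cache_file.exists():
        age = time.time() - cache_file.stat().st_mtime
        if age < max_age_seconds:
            return cache_file.read_text(encoding="utf-8")
    content = fetch()
    cache_file.parent.mkdir(parents=True, exist_ok=True)
    cache_file.write_text(content, encoding="utf-8")
    return content


# Usage (placeholders: replace OWNER/REPO with your own repository):
# spec = load_design_system(
#     Path.home() / ".cache" / "design-system" / "DESIGN_SYSTEM.md",
#     lambda: fetch_with_gh("OWNER/REPO", "DESIGN_SYSTEM.md"),
# )
```

Passing the fetcher in as a function keeps the cache logic testable without network access, and lets you swap `gh` for any other source.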

That’s brand governance through infrastructure, not verbal briefing.

What the W3C and the design tokens community say

In October 2025, the W3C Design Tokens Community Group released the first stable version of the Design Tokens spec (2025.10). Big milestone: for the first time, there’s an open, stable standard for how tokens should be defined and exchanged across tools, codebases, and platforms.

Tools like Style Dictionary and Tokens Studio already support it. Adobe, Google, Meta, Figma, Shopify, and Salesforce are among the orgs that contributed.

What that means in practice: when I use var(--brand-navy) in DESIGN_SYSTEM.md, I’m not making anything up. I’m following the semantic-token logic (token describes purpose, not raw value) that the global design systems community converged on as standard.

Semantic tokens beat primitive names. --color-cta-primary teaches more than --blue-500. Because the LLM learns not just which color to use, but why that color exists.
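A small Python sketch of how a semantic token can point at a primitive one. It is loosely modeled on the `$value` and `{alias}` conventions of the Design Tokens format, with hex strings used for brevity, so treat it as a simplification rather than a spec-compliant parser. The token tree and the choice of Navy for the CTA are assumptions made for illustration.

```python
def resolve_token(tokens: dict, path: str, _seen: tuple = ()) -> str:
    """Follow alias references like "{color.navy}" until a raw value is reached."""
    if path in _seen:
        raise ValueError(f"circular alias: {' -> '.join(_seen + (path,))}")
    node = tokens
    for key in path.split("."):
        node = node[key]
    value = node["$value"]
    if isinstance(value, str) and value.startswith("{") and value.endswith("}"):
        # The value is an alias: resolve the token it points to.
        return resolve_token(tokens, value[1:-1], _seen + (path,))
    return value


# Hypothetical token tree: one primitive and one semantic token that aliases it.
tokens = {
    "color": {
        "navy": {"$type": "color", "$value": "#0D1628"},  # primitive: raw value
        "cta": {
            "primary": {"$type": "color", "$value": "{color.navy}"},  # semantic: purpose
        },
    }
}

print(resolve_token(tokens, "color.cta.primary"))  # prints #0D1628
```

The semantic name carries the "why" (this is the primary call-to-action color), while the primitive carries the "what" (the hex value). Changing the brand color means editing one primitive, not every usage.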

Three rules I learned in the process

Along the way, decisions that looked small turned out to be critical.

1. The agent palette is different from the full product palette.

Ascent’s full design system has many more colors: cyan, aqua, yellow, violet. Those “live voices” exist for different modules and contexts. But for agents, I authorized only 4: Navy, Cream, Paper, Emerald. Fewer choices mean fewer chances to be wrong. An agent with 15 colors guesses. An agent with 4 colors gets it right.
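The restricted palette can be enforced mechanically. The sketch below, in Python, scans CSS for `var(--...)` references and reports any token outside the agent allowlist. Only `--brand-navy` is a name quoted in this post; the other three names and `--brand-violet` are hypothetical stand-ins.

```python
import re

# Only --brand-navy appears in this post; the other names are hypothetical.
AGENT_TOKENS = {"--brand-navy", "--brand-cream", "--brand-paper", "--brand-emerald"}

# Captures the custom property name inside var(...), ignoring any fallback value.
VAR_PATTERN = re.compile(r"var\(\s*(--[A-Za-z0-9_-]+)")


def unauthorized_tokens(css: str, allowed: set[str] = AGENT_TOKENS) -> set[str]:
    """Return the CSS custom properties referenced in `css` that agents may not use."""
    return {name for name in VAR_PATTERN.findall(css) if name not in allowed}


css = ".card { background: var(--brand-navy); color: var(--brand-violet, #fff); }"
print(sorted(unauthorized_tokens(css)))  # prints ['--brand-violet']
```

A color from the full product palette is not wrong in itself. It is simply outside what an agent is allowed to pick, and the check makes that boundary explicit.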

2. Two voice modes, never mixed.

I learned the hard way that the brand speaks in two completely different modes. On Instagram, the blog, and ads: founder mode (first person, “I, my, with me, let me tell you”). On the site, landing pages, and technical docs: institutional mode (third person, “Ascent, we, our”). Mixing them in the same copy block reads like two different brands talking at once. I formalized this in DESIGN_SYSTEM.md §3 with a per-channel decision table, canonical examples, and a Do/Don’t list.
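A per-channel decision table is easy to express as data. Here is a minimal Python sketch using the channels named above. The channel keys and function name are assumptions, and the real §3 table may be organized differently.

```python
from enum import Enum


class VoiceMode(Enum):
    FOUNDER = "founder"              # first person
    INSTITUTIONAL = "institutional"  # third person


# Channel keys are illustrative spellings of the channels listed in this post.
CHANNEL_VOICE = {
    "instagram": VoiceMode.FOUNDER,
    "blog": VoiceMode.FOUNDER,
    "ads": VoiceMode.FOUNDER,
    "site": VoiceMode.INSTITUTIONAL,
    "landing": VoiceMode.INSTITUTIONAL,
    "docs": VoiceMode.INSTITUTIONAL,
}


def voice_mode_for(channel: str) -> VoiceMode:
    """Look up the single voice mode for a channel; unknown channels are an error."""
    key = channel.strip().lower()
    if key not in CHANNEL_VOICE:
        raise KeyError(f"no voice mode defined for channel {channel!r}; add it to the table")
    return CHANNEL_VOICE[key]


print(voice_mode_for("Blog").value)     # prints founder
print(voice_mode_for("landing").value)  # prints institutional
```

Failing on an unknown channel is deliberate: a missing row should force a decision, not a guess.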

3. The file has to be the single source of truth, not one reference among many.

If DESIGN_SYSTEM.md is one reference among many, the agent guesses which one wins. When I declare the file the canonical source (with explicit hierarchy: tokens.css above DESIGN_SYSTEM.md, above the old PDF, above free interpretation), agent behavior changes. They stop inventing and start consulting.
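The explicit hierarchy can be read as a simple precedence rule. A minimal Python sketch, assuming each source is identified by a label and that conflicting values have already been collected. The labels mirror the hierarchy above; the conflicting values in the example are illustrative.

```python
# Highest precedence first, mirroring the hierarchy described above.
SOURCE_PRECEDENCE = ["tokens.css", "DESIGN_SYSTEM.md", "old PDF"]


def resolve_conflict(candidates: dict[str, str]) -> tuple[str, str]:
    """Return (source, value) from the highest-precedence source that defines a value."""
    for source in SOURCE_PRECEDENCE:
        if source in candidates:
            return source, candidates[source]
    # Nothing canonical defines it: stop instead of improvising.
    raise LookupError("no canonical source defines this value; do not improvise one")


# Illustrative conflict: the old PDF and the spec disagree on the navy value.
navy = {"old PDF": "#152238", "DESIGN_SYSTEM.md": "#0D1628"}
print(resolve_conflict(navy))  # prints ('DESIGN_SYSTEM.md', '#0D1628')
```

"Free interpretation" sits at the bottom of the hierarchy, which in this sketch means raising an error: when no source defines a value, the agent should ask rather than invent.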

Key insights

- Design system as operational infrastructure: not an internal communications artifact. It’s the one file every agent needs to read before producing anything for the brand.

- Semantic tokens close the fabrication space: LLMs fabricate values where they find no rules. var(--brand-navy) leaves no room for error; “dark blue tone” does.

- Restricted palette for agents: authorize fewer colors than the full system has. The agent with 4 colors is more consistent than the agent with 15.

- Two voice modes with a per-channel decision table: social and blog use founder mode (first person); site, doc, and landing use institutional mode (third person). Never mix them in the same block.

- Skills as brand governance: turning DESIGN_SYSTEM.md into a Claude Code Skill ensures the content loads only when needed and that any new agent inherits the rules automatically.

How to apply this

If you want to do the same, the process is direct:

Step 1. Gather raw material. Pull together everything that documents your brand, even implicitly: code repo with existing CSS/tokens, old visual identity, posts you consider “on point,” scattered notes, decks, briefs you’ve given verbally.

Step 2. Drop it into Claude Design (or a multimodal equivalent). Upload the material and ask: “read all this and give me back a complete design system, with palette, typography, voice, components, and per-context application rules.” Don’t write an elaborate prompt. Let the material speak.

Step 3. Compile to Markdown. Use Claude Code (or any code agent) to read the generated project and compile it into a single DESIGN_SYSTEM.md. That file ships with every prompt.

Step 4. Define a restricted agent palette. Explicitly separate, in the file, which colors are authorized for agent-generated pieces. Less is more.

Step 5. Document the two voice modes. Write it in the file, with canonical examples, per-channel decision table, and a Do/Don’t list. That’s what contains voice hallucinations.

Step 6. Turn it into a Skill. If you’re using Claude Code, create a Skill that points to the file. Any new agent inherits the rules without manual briefing.

Step 7. Maintain with absolute dates. Whenever the system evolves, update the file with version and date. Mine is at v1.3, dated 2026-04-24. Agents need to know which version they’re reading.
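For Step 7, a version stamp is only useful if something can read it. The Python sketch below assumes a stamp of the form `vMAJOR.MINOR ... YYYY-MM-DD` somewhere in the first ten lines of the file. That header format is an assumption for illustration, not necessarily how DESIGN_SYSTEM.md is laid out.

```python
import re
from datetime import date

# Assumed stamp format: "v1.3" followed later by an ISO date, e.g. "v1.3, dated 2026-04-24".
STAMP_PATTERN = re.compile(r"v(\d+)\.(\d+)\D+(\d{4})-(\d{2})-(\d{2})")


def read_version(markdown: str) -> tuple[tuple[int, int], date]:
    """Return ((major, minor), date) from the first stamp in the file's first 10 lines."""
    head = "\n".join(markdown.splitlines()[:10])
    match = STAMP_PATTERN.search(head)
    if match is None:
        raise ValueError("no version/date stamp found near the top of the file")
    major, minor, year, month, day = map(int, match.groups())
    return (major, minor), date(year, month, day)


header = "# Ascent Design System\nVersion: v1.3, dated 2026-04-24\n"
print(read_version(header))  # prints ((1, 3), datetime.date(2026, 4, 24))
```

An agent or a CI job can compare this stamp against the cached copy and refuse to work from a stale version.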

How are you documenting your brand for AI today? Still PDF in a Drive folder, or already compiled into .md? Drop a comment in Discord.

Hit me up on Instagram.


@pabllodantas